Microsoft has unveiled a new small language model (SLM) designed to help developers build multimodal AI applications that can operate efficiently on lightweight computing devices. The company emphasizes that this model can process speech, vision, and text locally on-device, consuming significantly less computational power than previous iterations.

The Rise of Small Language Models (SLMs)

While much of the innovation in generative AI has been centered around large language models (LLMs) that require extensive computational resources and cloud-based infrastructure, there is growing interest in developing smaller, more efficient models. These small language models (SLMs) are designed to function effectively on resource-constrained devices, including smartphones, laptops, and various edge computing devices. By enabling AI applications to run on-device, SLMs help reduce latency, enhance privacy, and improve accessibility for a wider range of applications.

Microsoft's latest contribution to the SLM space comes in the form of its Phi family of models. In December, the company introduced the fourth generation of these models, and now it is expanding the lineup with two new additions: Phi-4-multimodal and Phi-4-mini. These models are available via Azure AI Foundry, Hugging Face, and the Nvidia API Catalog under the MIT license, making them accessible to a broad range of developers and enterprises.

Phi-4-Multimodal: A Versatile AI Model for Speech, Vision, and Text

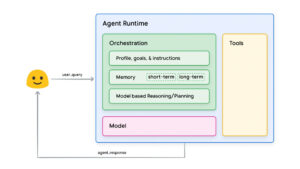

Among the latest additions to the Phi family, Phi-4-multimodal stands out for its ability to process speech, vision, and language simultaneously. The model has 5.6 billion parameters and employs the mixture-of-LoRAs technique. LoRA, or Low-Rank Adaptation, is a method for improving a language model's performance on specific tasks without fine-tuning all of its parameters. Instead, developers introduce a small number of new weights into the model and train only these additions. This approach improves efficiency, reduces memory usage, and results in a model that is lightweight and easy to store and share.
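To make the LoRA idea concrete, here is a minimal sketch of a single adapted layer in plain Python. It is an illustration of the general technique, not Microsoft's implementation: the names `lora_forward` and `lora_param_counts`, the layer sizes, the rank `r`, and the scaling factor `alpha` are all assumptions chosen for the example. The frozen weight matrix `W` is left untouched, and only the two small matrices `A` and `B` would be trained.

```python
import random

def matvec(M, v):
    """Multiply matrix M (a list of rows) by vector v."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def lora_forward(W, A, B, x, alpha=1.0):
    """Compute W x + (alpha / r) * B (A x).

    W (d_out x d_in) is the frozen pretrained weight.
    A (r x d_in) and B (d_out x r) are the small added weights;
    in LoRA only A and B are updated during training.
    """
    r = len(A)
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]

def lora_param_counts(d_out, d_in, r):
    """Return (weights in the full layer, weights added by LoRA)."""
    return d_out * d_in, r * (d_in + d_out)

# Toy sizes, chosen only for illustration.
rng = random.Random(0)
d_out, d_in, r = 4, 6, 2
W = [[rng.gauss(0, 1) for _ in range(d_in)] for _ in range(d_out)]
A = [[rng.gauss(0, 0.01) for _ in range(d_in)] for _ in range(r)]
B = [[0.0] * r for _ in range(d_out)]  # zero init: adapter starts as a no-op
x = [rng.gauss(0, 1) for _ in range(d_in)]

# Before any training, the adapted layer matches the frozen layer.
same_as_base = lora_forward(W, A, B, x) == matvec(W, x)

# Hypothetical 4096 x 4096 layer with rank 8.
full, added = lora_param_counts(4096, 4096, 8)
```

For the hypothetical 4096 x 4096 layer, the full matrix holds 16,777,216 weights while the rank-8 adapter adds 65,536, which is why an adapter is cheap to train, store, and share. A mixture-of-LoRAs setup would keep several such `(A, B)` pairs alongside one shared base model; how Phi-4-multimodal selects among them is not described here.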

By leveraging these optimizations, Phi-4-multimodal delivers low-latency inference and is well-suited for on-device execution, ensuring minimal computational overhead. This makes it an ideal choice for various applications, including:

- Running AI models locally on smartphones without requiring constant cloud connectivity.

- Enhancing in-car AI assistants by providing real-time, multimodal interactions.

- Supporting lightweight enterprise applications, such as multilingual financial services platforms.

Industry Reactions and Use Case Potential

Industry analysts have noted the significance of Microsoft's introduction of Phi-4-multimodal, particularly for developers looking to create AI-based applications for mobile and resource-constrained devices.

Charlie Dai, Vice President and Principal Analyst at Forrester, highlighted the importance of the model's multimodal capabilities, stating, "Phi-4-multimodal integrates text, image, and audio processing with strong reasoning capabilities, enhancing AI applications for developers and enterprises with versatile, efficient, and scalable solutions."

However, Yugal Joshi, Partner at Everest Group, pointed out that while these SLMs can be deployed across compute-constrained environments, mobile devices may not always be ideal for implementing most generative AI use cases. Despite this, he views the Phi-4 models as a step forward, noting that Microsoft appears to be drawing inspiration from companies like DeepSeek, which are also working to reduce reliance on large-scale compute infrastructure.

Performance and Benchmarking

In terms of performance, Phi-4-multimodal demonstrates both strengths and areas for improvement. It trails competitors such as Gemini-2.0-Flash and GPT-4o-realtime-preview in speech question answering (QA) tasks. Microsoft attributes this gap to the smaller size of the Phi-4 models, which inherently limits their capacity to retain factual question-answering knowledge. However, the company has stated that ongoing development efforts aim to address this limitation in future iterations.

Despite this shortcoming, Phi-4-multimodal excels in several key areas. It outperforms well-known LLMs, including Gemini-2.0-Flash Lite and Claude-3.5-Sonnet, in mathematical and scientific reasoning, as well as optical character recognition (OCR) and visual science reasoning tasks. This makes the model particularly useful for applications that require strong analytical and reasoning capabilities rather than solely relying on factual recall.

Phi-4-Mini: A Compact Yet Powerful Model

Alongside Phi-4-multimodal, Microsoft has also introduced Phi-4-mini, a more compact model with 3.8 billion parameters. Phi-4-mini is based on a dense decoder-only transformer architecture and supports sequences of up to 128,000 tokens. This makes it a versatile model that balances efficiency with the ability to process long-form text and perform complex tasks with reduced computational demand.
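As a rough, back-of-the-envelope illustration of what these parameter counts mean for on-device use, the sketch below estimates the memory needed just to hold the weights. The helper `weight_memory_gb` is hypothetical, and the bytes-per-parameter values (2 for 16-bit weights, 0.5 for 4-bit quantization) are assumptions rather than published figures for these models. The estimate ignores activations and the attention cache, which grow with sequence length.

```python
def weight_memory_gb(num_params, bytes_per_param):
    """Approximate memory for model weights alone, in gigabytes (10**9 bytes)."""
    return num_params * bytes_per_param / 1e9

PHI_4_MINI_PARAMS = 3_800_000_000        # 3.8 billion, from the article
PHI_4_MULTIMODAL_PARAMS = 5_600_000_000  # 5.6 billion, from the article

# Assumed storage formats; actual deployments may differ.
mini_fp16 = weight_memory_gb(PHI_4_MINI_PARAMS, 2)         # 16-bit weights
mini_int4 = weight_memory_gb(PHI_4_MINI_PARAMS, 0.5)       # 4-bit quantized
multimodal_fp16 = weight_memory_gb(PHI_4_MULTIMODAL_PARAMS, 2)

print(f"Phi-4-mini, 16-bit: ~{mini_fp16:.1f} GB")
print(f"Phi-4-mini, 4-bit: ~{mini_int4:.1f} GB")
print(f"Phi-4-multimodal, 16-bit: ~{multimodal_fp16:.1f} GB")
```

Under these assumptions, the weights of Phi-4-mini come to roughly 7.6 GB at 16 bits and about 1.9 GB at 4 bits, which gives a sense of why models of this size are candidates for laptops and phones.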

The Future of Small Language Models

The release of Phi-4-multimodal and Phi-4-mini signals a broader trend in AI development: the shift towards creating efficient, scalable, and versatile models that can function across various environments, including edge devices. As AI technology continues to evolve, the demand for models that can run efficiently on-device will likely grow, enabling more privacy-conscious and responsive AI applications.

Microsoft’s commitment to making these models openly available through multiple platforms ensures that developers and enterprises have access to cutting-edge AI capabilities while minimizing infrastructure requirements. As further optimizations and improvements are made, small language models like the Phi-4 series could play a crucial role in expanding AI’s reach beyond high-powered cloud environments, bringing powerful AI-driven experiences to everyday devices.

With continued advancements, it is expected that these small yet powerful models will unlock new possibilities for AI applications in industries ranging from finance and healthcare to automotive and consumer electronics. Microsoft's latest developments in SLMs represent a significant step toward a future where AI is more accessible, efficient, and seamlessly integrated into daily life.
